From DeepSeek V3 to V3.2: Architecture, Sparse Attention, and RL Updates

Last updated: January 1st, 2026

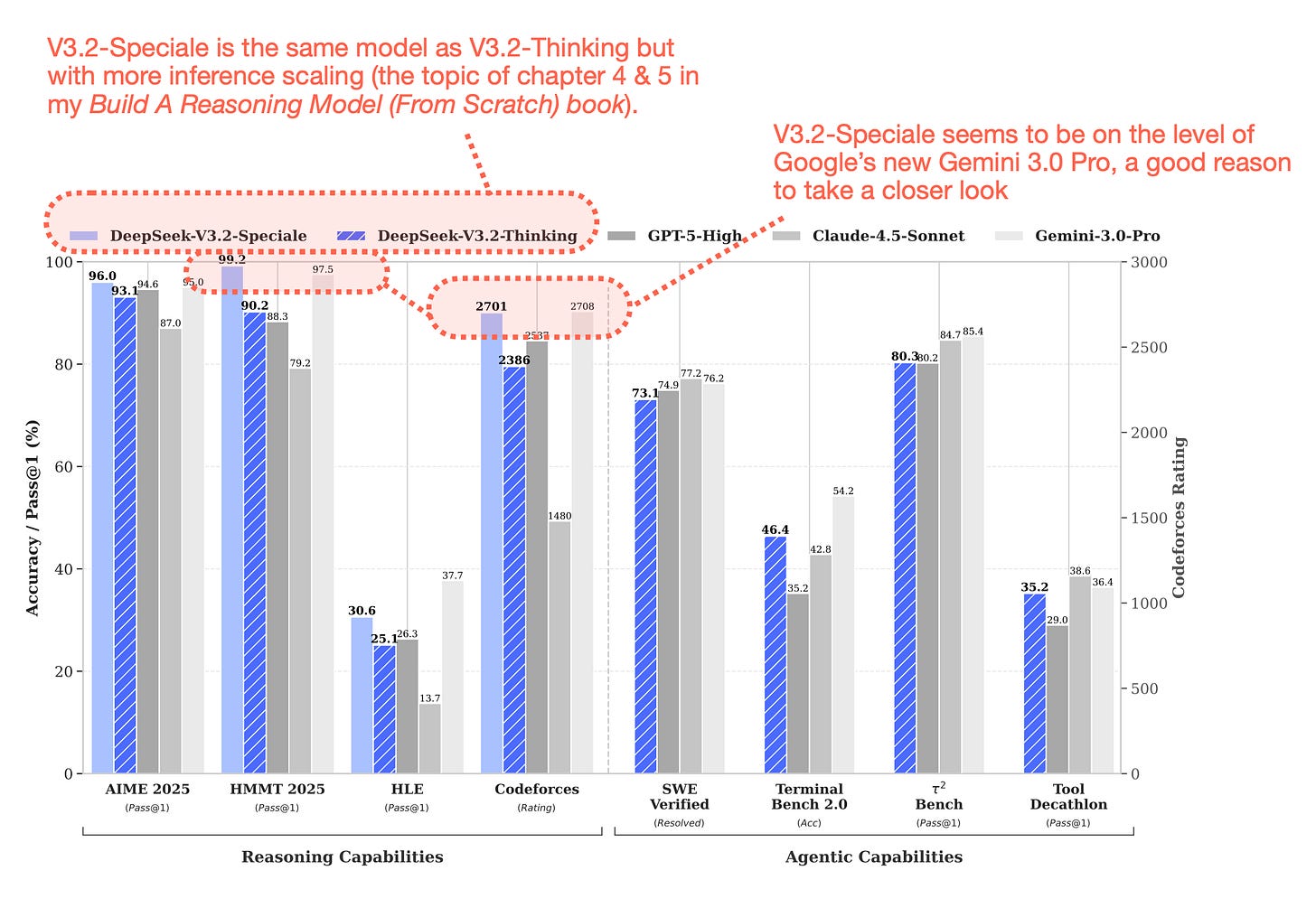

Similar to DeepSeek V3, the team released their new flagship model over a major US holiday weekend. Given that DeepSeek V3.2 performs really well (on a GPT-5 and Gemini 3.0 Pro level) and is also available as an open-weight model, it’s definitely worth a closer look.

I covered the predecessor, DeepSeek V3, at the very beginning of my article The Big LLM Architecture Comparison, which I kept extending over the months as new architectures got released. Originally, as I had just gotten back from the Thanksgiving holidays with my family, I planned to “just” extend that article with another section on this new DeepSeek V3.2 release. But I then realized that there’s just too much interesting information to cover, so I decided to make this a longer, standalone article.

There’s a lot of interesting ground to cover and a lot to learn from their technical reports, so let’s get started!

1. The DeepSeek Release Timeline

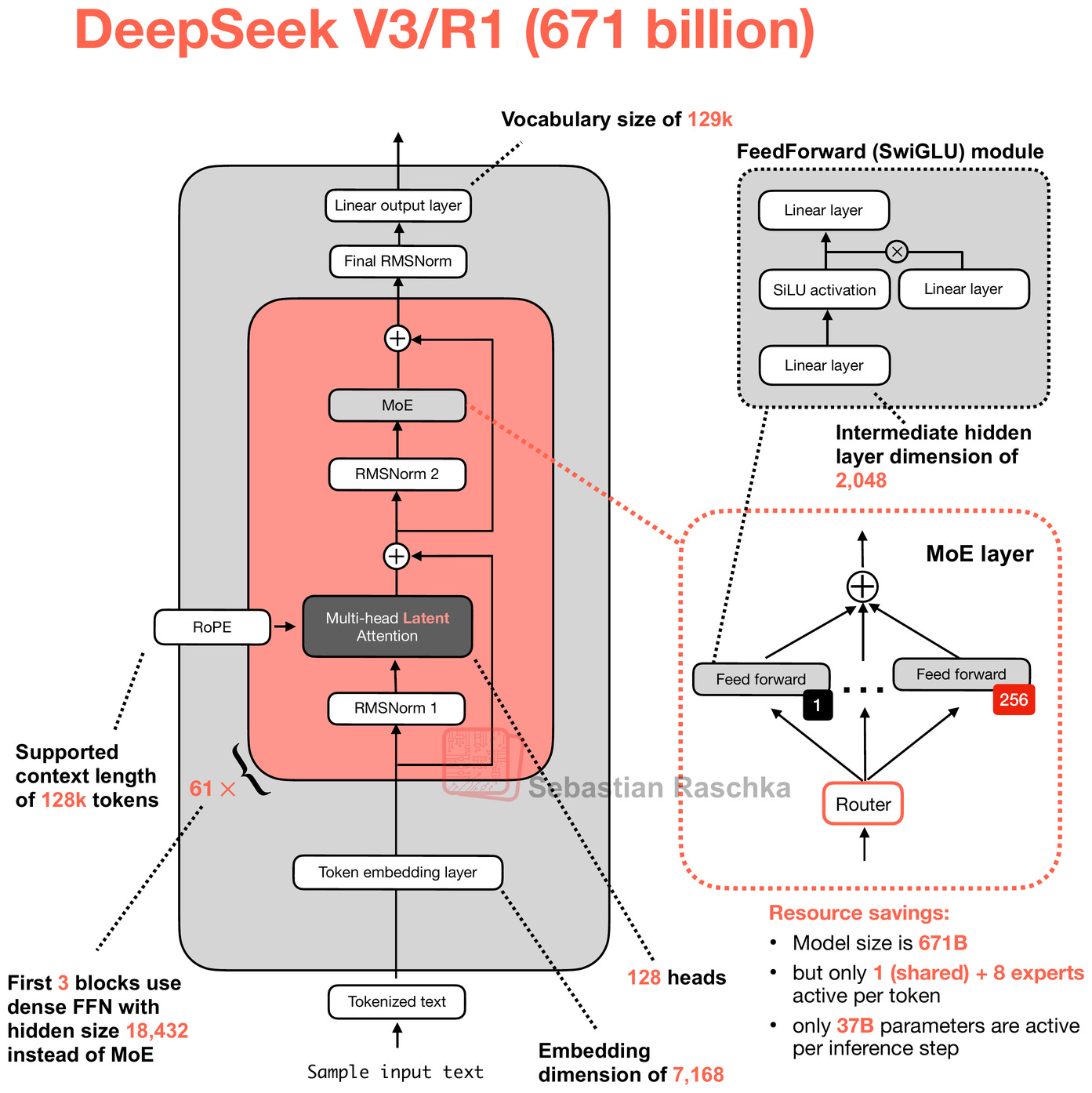

While DeepSeek V3 wasn’t popular immediately upon release in December 2024, the DeepSeek R1 reasoning model (based on the identical architecture, using DeepSeek V3 as a base model) helped DeepSeek become one of the most popular open-weight models and a legit alternative to proprietary models such as the ones by OpenAI, Google, xAI, and Anthropic.

So, what’s new since V3/R1? I am sure that the DeepSeek team has been super busy this year. However, there hasn’t been a major release in the last 10-11 months since DeepSeek R1.

Personally, I think it’s reasonable to take ~1 year between major LLM releases since it’s A LOT of work. However, I saw on various social media platforms that people were pronouncing the team “dead” (as a one-hit wonder).

I am sure the DeepSeek team has also been busy navigating the switch from NVIDIA to Huawei chips, although, as far as I know, they are back to using NVIDIA chips. By the way, I am not affiliated with them, nor have I spoken with them; everything here is based on public information.

Finally, it’s also not that they haven’t released anything. There have been a couple of smaller releases that trickled in
