Mantic Monday: Groundhog Day

Having Your Own Government Try To Destroy You Is (At Least Temporarily) Good For Business

On Friday, the Pentagon declared AI company Anthropic a “supply chain risk”, a designation never before given to an American company. This unprecedented move was seen as an attempt to punish, maybe even destroy, the company. How effective was it?

Anthropic isn’t publicly traded, so we turn to the prediction markets. Ventuals.com has a “perpetual future” on Anthropic stock, a complicated instrument attempting to track the company’s valuation, to be resolved at the IPO. Here’s what they’ve got:

Upon the “supply chain risk” designation, predicted value at IPO fell from about $550 billion to $475 billion, then, after a day or two, went back up to $550 billion. No effect!

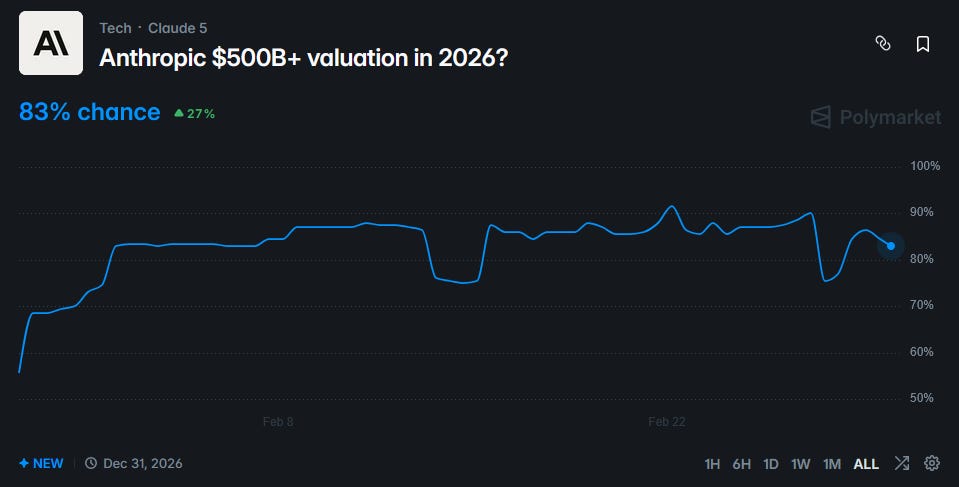

A coarser yes-no Polymarket tells the same story:

The chance of Anthropic getting a $500 billion+ valuation in 2026 fell from 90% to 76%, before rebounding to 83%.

Why have the markets shrugged off this seemingly important event?

Partly it’s because Anthropic seems likely to win on appeal. Hegseth has said the government will keep using Anthropic for the next six months (undermining his case that they’re a national security risk) and has signed a substantially similar contract with OpenAI (undermining his case that their contract terms were unworkable). The prediction markets think the courts will be sympathetic:

But even in the 28% of timelines where the designation sticks, things don’t seem so bad. Secretary of War Hegseth originally tweeted that:

In conjunction with the President's directive for the Federal Government to cease all use of Anthropic's technology, I am directing the Department of War to designate Anthropic a Supply-Chain Risk to National Security. Effective immediately, no contractor, supplier, or partner that does business with the United States military may conduct any commercial activity with Anthropic.

Framed this way, the Pentagon’s actions sound devastating. Anthropic relies on compute to train and run its AIs. Most of this compute is in data centers owned by Amazon, Google, and Microsoft. At least Amazon and Microsoft have contracts with the US military. If they had to drop Anthropic, it would make it impossible for the company to stay a frontier AI lab.

But in their own blog post, Anthropic described the situation differently:

...If you are an individual customer or hold a commercial contract with Anthropic, your access to Claude—through our API, claude.ai, or any of
