Next-Token Predictor Is An AI's Job, Not Its Species

I.

In The Argument, Kelsey Piper gives a good description of the ways that AIs are more than just “next-token predictors” or “stochastic parrots” - for example, they are also shaped by fine-tuning and RLHF. But commenters, while appreciating the subtleties she introduces, object that these are still just extra layers on top of a machine that basically runs on next-token prediction.

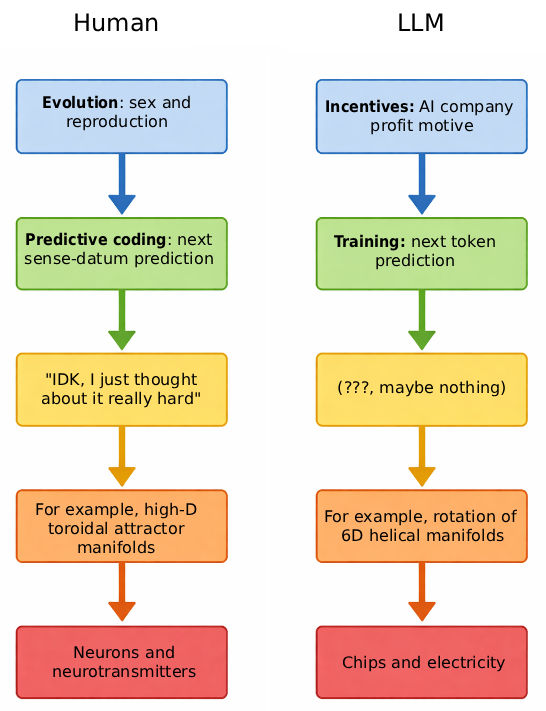

I want to approach this from a different direction. I think overemphasizing next-token prediction is a confusion of levels. On the levels where AI is a next-token predictor, you are also a next-token (technically: next-sense-datum) predictor. On the levels where you’re not a next-token predictor, AI isn’t one either.

Putting all the levels in graphic form:

II.

The human brain was designed by a series of nested optimization loops. The outermost loop is evolution, which optimized the human genome for being good at survival, sex, reproduction, and child-rearing.

But evolution can’t encode everything important in the genome. It obviously can’t include individual and cultural features like the vocabulary of your native language, or your particular mother’s face. But even a lot of things that could be in there in theory, like how to walk, or which animals are most nutritious, are missing - the genome is too small for it to be worth it. Instead, evolution gives us algorithms that let us learn from experience.

These algorithms are a second optimization loop, “evolving” neuron patterns into forms that better promote fitness, reproduction, etc. The most powerful such algorithm is called predictive coding, which neuroscience increasingly considers a key organizing principle of the brain. Wikipedia describes it as:

> In neuroscience, predictive coding (also known as predictive processing) is a theory of brain function which postulates that the brain is constantly generating and updating a “mental model” of the environment. According to the theory, such a mental model is used to predict input signals from the senses that are then compared with the actual input signals from those senses.

In other words, the brain organizes itself and learns by constantly trying to predict the next sense-datum, then updating synaptic weights towards whatever form would have predicted that sense-datum most efficiently. This is a very close (not exact) analogue to the next-token prediction of AI.
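To make the predict-then-update loop concrete, here is a minimal toy sketch in Python. It is not a model of the brain or of how any real AI is trained. The function name, the single weight `w`, the learning rate, and the made-up "sensory stream" are all illustrative assumptions. The only thing it shares with the description above is the shape of the loop: guess the next datum, compare the guess with what actually arrives, and nudge the weight in the direction that would have shrunk the error.

```python
def train_next_datum_predictor(stream, learning_rate=0.5, passes=100):
    """Toy predictor: learn one weight w so that w * x[t] approximates x[t+1].

    Hypothetical illustration only. Returns the learned weight and the
    summed squared prediction error recorded for each pass over the stream.
    """
    w = 0.0
    errors_per_pass = []
    for _ in range(passes):
        total_squared_error = 0.0
        # Walk the stream as (current datum, datum that actually came next).
        for current, actual_next in zip(stream, stream[1:]):
            predicted_next = w * current          # predict the next datum
            error = actual_next - predicted_next  # compare with what arrived
            w += learning_rate * error * current  # nudge the weight to reduce the error
            total_squared_error += error ** 2
        errors_per_pass.append(total_squared_error)
    return w, errors_per_pass


# A made-up stream in which every value is half the previous one.
stream = [1.0, 0.5, 0.25, 0.125, 0.0625]
w, errors = train_next_datum_predictor(stream)
print(f"learned weight: {w:.4f}")
print(f"error on first pass: {errors[0]:.4f}, on last pass: {errors[-1]:.2e}")
```

Nothing in the loop is told the rule "each value is half the previous one". The weight drifts towards 0.5 purely because that is the value that makes the predictions stop being wrong.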

This process organizes the brain into a form capable of predicting sense-data, called a “world-model”. For example, if you encounter a tiger, the best way of
