New Paper: Towards a science of AI agent reliability

By Stephan Rabanser, Sayash Kapoor, Arvind Narayanan

Suppose you hear about a new AI agent for improving productivity — by making purchases, or writing code, or sending emails, or handling a customer on your behalf. Should you trust it? Can the agent do the job reliably enough? After all, there are many horror stories of agents going wrong.

Surprisingly, although the unreliability of AI agents is well known, the AI industry currently has no good tools for measuring reliability, or even a good definition of it.

Arvind and Sayash have long been thinking about this. Last fall, we were joined by postdoctoral researcher Stephan Rabanser, whose PhD looked at the reliability question in simpler, more traditional AI systems. We recruited a few other independent researchers, and have released what we hope is a comprehensive measurement of reliability. Our draft paper is called Towards a Science of AI Agent Reliability.

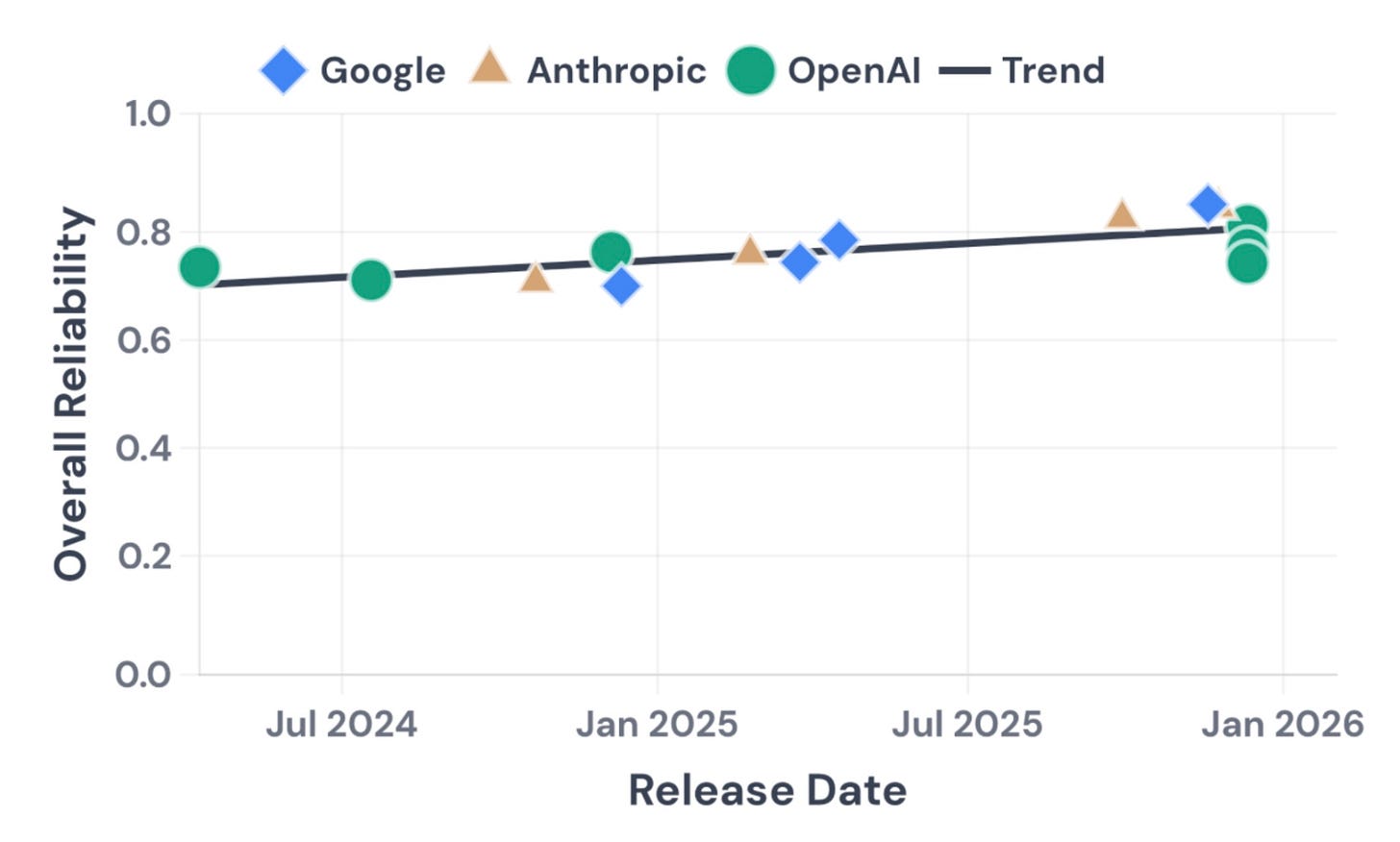

We borrowed insights from many other fields, such as nuclear and aviation safety. We were able to decompose reliability into 12 different dimensions. Evaluating 14 models on two complementary benchmarks, we found that nearly two years of rapid capability progress have produced only modest reliability gains. See our interactive dashboard here.

While our findings are tentative at this stage, we hope they can help explain something that puzzles many in the industry: why the economic impacts of AI agents have been gradual even though agents are crushing capability benchmarks. To help the community track reliability systematically, we plan to launch an AI agent “reliability index”. We hope this will stimulate researchers and industry to invest effort into improving reliability.

Accuracy isn’t enough: four dimensions of reliability

When we consider a coworker to be reliable, we don’t just mean that they get things right most of the time. We mean something richer:

- They get it right consistently, not right today and wrong tomorrow on the same thing (Consistency)
- They don’t fall apart when conditions aren’t perfect (Robustness)
- They tell you when they’re unsure rather than confidently guessing (Calibration)
- When they do mess up, their mistakes
