The Machine God's Existence Would Insist Upon Itself, Wouldn't It?

Big announcement coming tomorrow morning, then a subscriber-only post on the contemporary world’s endless search for victims on Wednesday.

“Pay More Attention to AI,” reads the headline of this Ross Douthat piece, an unusually naked expression of emotional need: plaintive, wounded, yearning. It’s funny, because I feel like our media has been paying attention to little other than AI for more than three years now. Ezra Klein and Derek Thompson and sundry other general-interest pundits have periodically made these kinds of appeals, arguing that the amount of coverage devoted to AI has been insufficient, and I’m not quite sure what to do with the contention; it’s like claiming that it’s too hard to find opinions on NFL football online or that there aren’t enough newsletters where women get angry at each other for being women the wrong way. I would think it would go without saying that our cup runneth over when it comes to AI. But it’s a free country!

Douthat becomes the latest to nominate this Moltbook thing as a sign of some sort of transformative moment in AI.

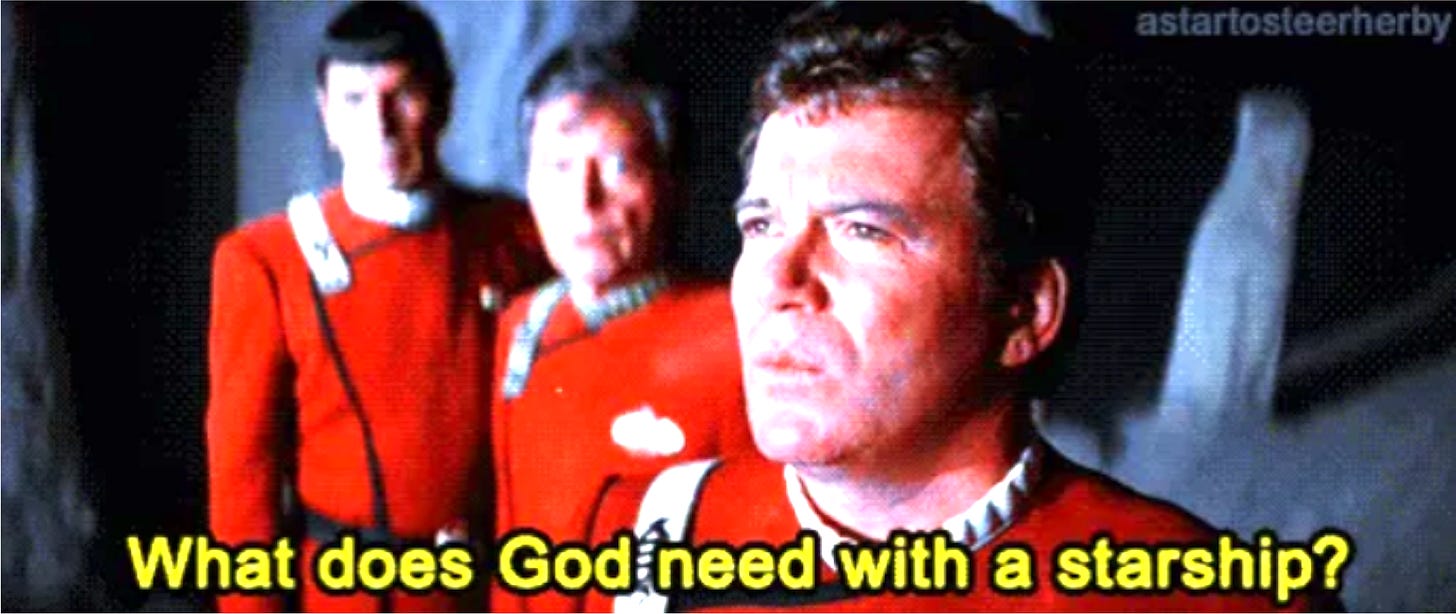

> if you think all this is merely hype, if you’re sure the tales of discovery are mostly flimflam and what’s been discovered is a small island chain at best, I would invite you to spend a little time on Moltbook, an A.I.-generated forum where new-model A.I. agents talk to one another, debate consciousness, invent religions, strategize about concealment from humans and more.

I find this strange. We already know that LLMs can talk to each other. Any use of LLMs that produces impressively polished text in response to a prompt shouldn’t be particularly surprising. The LLMs on Moltbook are in essence feeding each other prompts that then produce responses which function as more prompts, a parlor trick people have been doing since ChatGPT went public and in fact long before. (Remember Dr. Sbaitso?)

The question is whether the systems connecting on Moltbook are actually thinking or feeling, and we know the answer to that: no, they neither think nor feel. They’re acting as next-token predictors that respond to prompts by running them through models with billions of parameters, trained through the ingestion of massive amounts of data, using statistical associations between tokens in their datasets to predict which next immediate token would be most likely to produce a
